A/B Testing: examples and best practices

8min • Last updated on Apr 20, 2026

Alexandra Augusti

Chief of Staff

Before rolling out a new campaign, redesigning a landing page, or changing a CTA, one question matters most: which version will actually perform better?

In digital marketing, product growth, and conversion rate optimisation, small changes can have a measurable impact on user behaviour. A different headline, page layout, button label, or email subject line can influence clicks, conversions, engagement, and revenue.

That is why A/B testing has become a core optimisation method. By comparing an original version with a new variation, businesses can understand which changes generate better results and make decisions based on data rather than assumptions.

Key takeaways

A/B testing compares two versions of the same element to identify which one performs better.

It is a controlled experiment based on random traffic distribution between a control version and a variation.

It can be used to optimise key KPIs such as conversion rate, click-through rate, engagement, revenue per visitor, and average order value.

Reliable results depend on clear hypotheses, sufficient sample size, proper test duration, and statistical significance.

A/B testing is most effective when integrated into a broader data-driven marketing and audience experimentation strategy.

What is A/B testing?

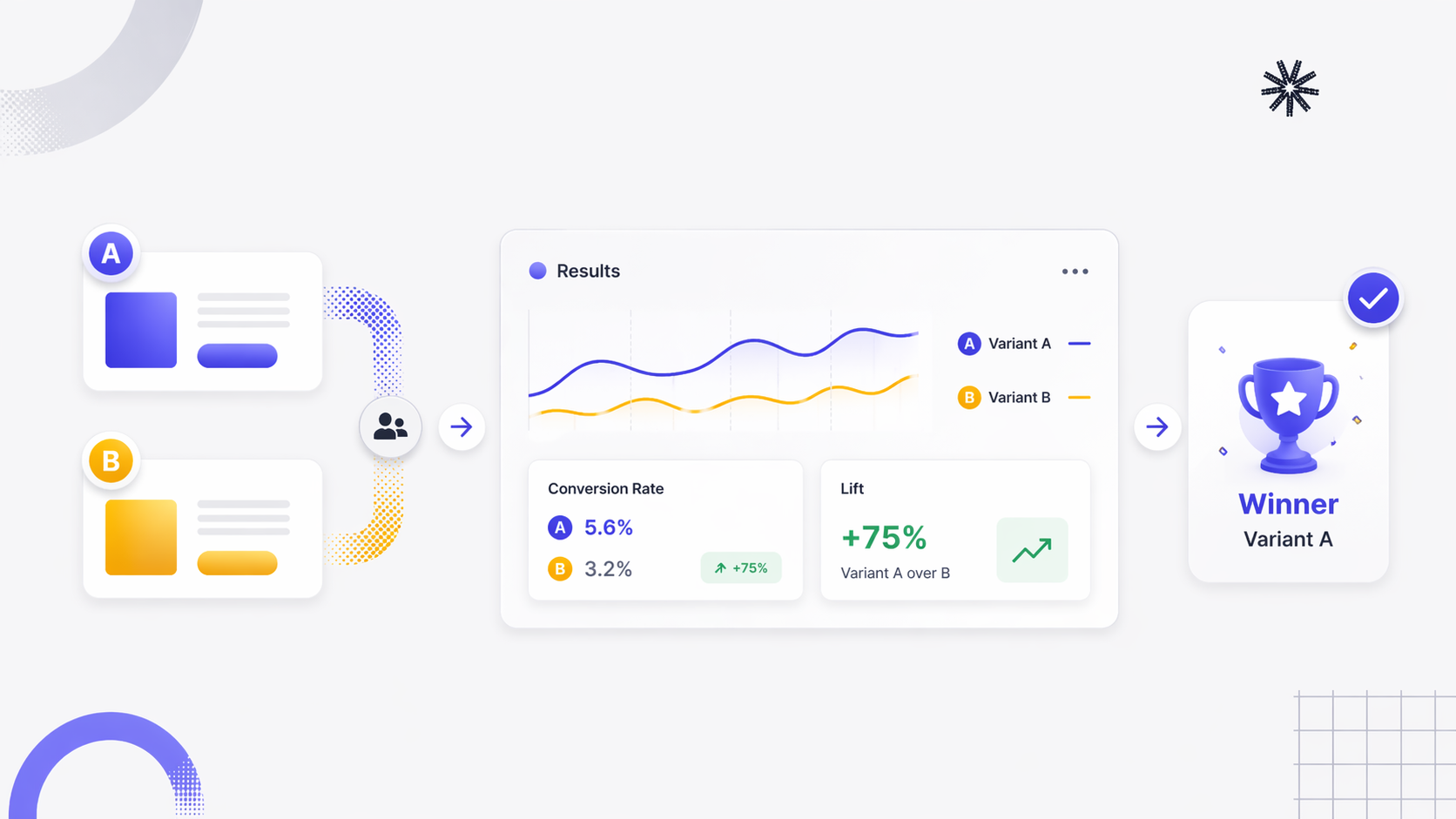

A/B testing, also known as split testing, is an experimentation method used to compare two versions of the same digital element in order to determine which one performs better.

In a standard A/B test, version A is the original version, also called the control. Version B is a modified version that includes a specific change. Traffic is then randomly split between the two versions so that teams can measure the impact of that change on user behaviour.

The goal is simple: identify which variation generates the best result according to a defined KPI.

In practice, however, A/B testing is much more than a simple comparison. It is a structured method used to validate hypotheses, improve website performance, optimise conversion paths, and support better decision-making across marketing and product teams.

Because it isolates the impact of a change, A/B testing helps businesses understand what actually influences user actions rather than relying on instinct, internal opinions, or design preferences.

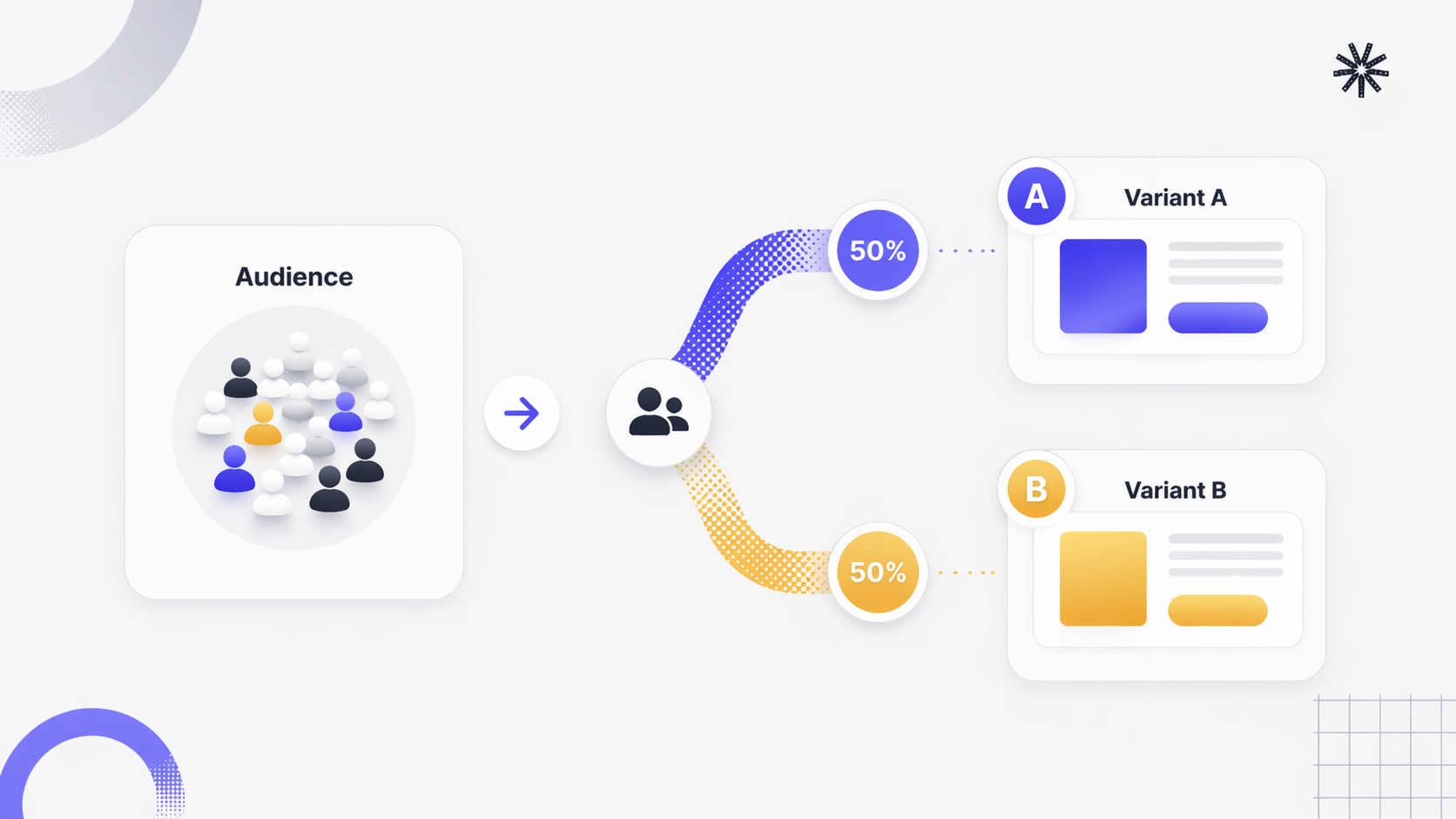

A/B testing principle

What can you A/B test?

A/B testing can be applied to almost any element that affects the user experience or conversion journey. Common examples include:

website pages and landing pages

headlines and value propositions

CTA buttons

forms and checkout flows

product page layouts

navigation menus

images and visual hierarchy

email subject lines and email content

ad copy and creatives

promotional offers and pricing displays

The principle remains the same in every case: compare an original version with a new version that includes one targeted change, then measure the difference in performance.

How does an A/B test work?

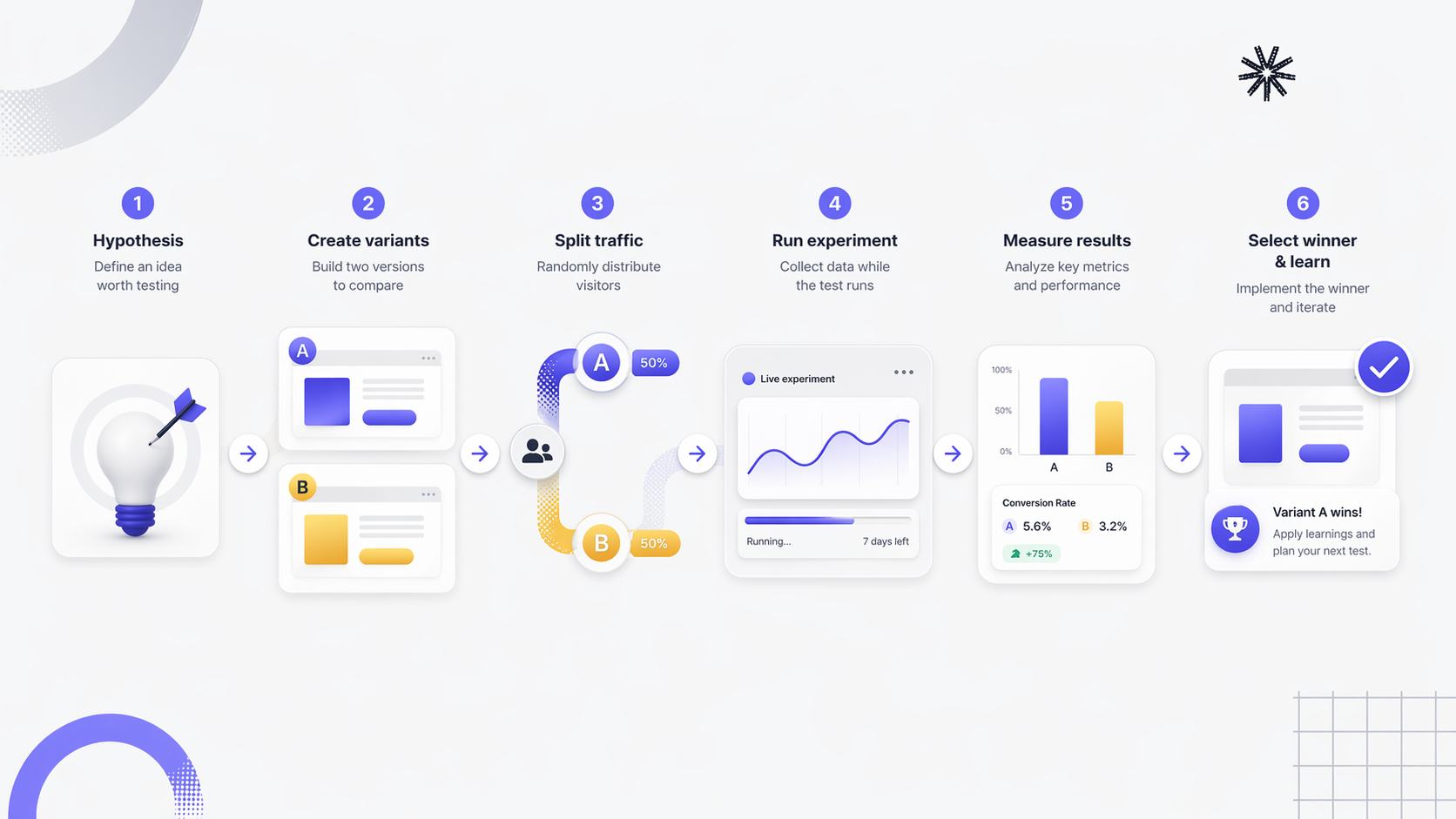

An A/B test follows a structured experimentation process. To generate reliable results, each step must be planned carefully.

1. Define a clear objective

Every A/B test should start with a precise objective linked to a measurable KPI. For example, the goal may be to:

increase the conversion rate on a landing page

improve the click-through rate of a CTA

raise the open rate of an email campaign

reduce bounce rate on a key website page

increase return on ad spend (ROAS)

Without a clear objective, it becomes difficult to design a relevant test or interpret the final results correctly.

2. Formulate a hypothesis

A test should never begin with a random change. It should begin with a hypothesis based on user behaviour, analytics, research, or friction points in the customer journey.

For example: "Changing the CTA text from 'Download now' to 'Get the guide' will increase conversions because the value of the action is clearer."

A strong hypothesis is specific, measurable, and tied to a business outcome.

3. Create the variants

Once the hypothesis is defined, two versions are created:

Version A: the original version

Version B: the new variation

The best practice is to test one variable at a time. This makes it easier to isolate the impact of the change and attribute performance differences to a specific element.

4. Split traffic randomly

Traffic is then distributed between the two versions, often with a 50/50 split.

This randomisation is essential. It ensures that both versions are exposed to comparable audiences, which reduces bias and improves the validity of the experiment. Without proper traffic allocation, results may reflect external factors rather than the actual impact of the tested change.
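As an illustration of the random split described above, here is a minimal sketch of one common approach: hashing a user identifier so that each visitor is assigned at random but always sees the same version. The function name `assign_variant`, the experiment name, and the user IDs are assumptions made for this example, not part of any specific testing tool.

```python
import hashlib

def assign_variant(user_id: str, experiment: str, split: float = 0.5) -> str:
    """Return "A" or "B" for a user; the answer is stable across visits."""
    # Hash the experiment name together with the user ID so the same user
    # can fall into different groups in different experiments.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Map the first 8 hex digits to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "A" if bucket < split else "B"

# Hypothetical traffic: 10,000 made-up user IDs in a made-up experiment.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "cta-wording")] += 1
print(counts)  # the two groups should be close to 5,000 each
```

Hashing rather than drawing a fresh random number on every visit keeps the experience consistent for returning users, which matters when the KPI is measured over several sessions.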

5. Run the test long enough

An A/B test must run long enough to collect sufficient data. Stopping too early is one of the most common mistakes in experimentation.

Results may appear positive after a few days, but early differences are not always meaningful. A reliable test depends on an adequate sample size and on enough time to cover natural fluctuations in behaviour across weekdays, devices, traffic sources, and campaign cycles.
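To make the duration question concrete, the sketch below converts a required sample size into a number of days. The traffic figures are hypothetical, and rounding up to full weeks is a convention assumed here so that every weekday is represented equally.

```python
import math

def estimated_duration_days(sample_per_variant: int, daily_visitors: int,
                            variants: int = 2) -> int:
    """Days needed to reach the sample size, rounded up to whole weeks."""
    total_needed = sample_per_variant * variants
    raw_days = math.ceil(total_needed / daily_visitors)
    # Round up to a multiple of 7 so each weekday appears equally often.
    return math.ceil(raw_days / 7) * 7

# Hypothetical inputs: 8,000 visitors needed per version, 1,500 visitors per day.
print(estimated_duration_days(8_000, 1_500))  # 14
```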

6. Analyse the results

Once enough users have been exposed to each version, results can be analysed. Common KPIs include:

conversion rate

click-through rate

revenue per user or per visitor

average order value

bounce rate

form completion rate

engagement metrics

At this stage, performance must be evaluated not only through raw numbers but also through statistical significance. Without it, an observed difference may simply be due to random variation.
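One standard way to check significance for conversion rates is a two-proportion z-test. The sketch below is a minimal version using only the Python standard library; the visitor and conversion counts are invented for illustration, and a dedicated statistics package or testing platform may use a different method.

```python
import math

def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the assumption that both versions perform the same.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in the data; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Made-up counts: 200 of 5,000 visitors convert on A, 250 of 5,000 on B.
z, p_value = two_proportion_z_test(200, 5_000, 250, 5_000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
print("significant at 5%" if p_value < 0.05 else "not significant at 5%")
```

A p-value below the threshold agreed before the test (0.05 is a common choice) suggests the difference is unlikely to be random variation alone.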

Step-by-Step A/B Testing

A/B Testing examples in marketing

A/B testing can be used across the full customer journey. Here are several practical examples.

Landing pages

A company may test two different headlines on a landing page to determine which value proposition drives more sign-ups. A change in wording can significantly affect how users perceive the offer and whether they convert.

CTA buttons

A team may compare "Start free trial" versus "Get started free" to see which CTA produces more clicks and conversions. It can also test button placement, size, or surrounding copy.

Forms

Reducing the number of required fields in a lead generation form may increase form completion rate. Testing long versus short forms is a common way to reduce friction in the conversion path.

Product pages

In e-commerce, a business may test different product page layouts, images, trust signals, or delivery messages to improve add-to-cart rate and purchase completion.

Email campaigns

Email marketers often test subject lines, preview text, or send times. A personalised subject line may outperform a generic one, or a shorter version may increase open rate.

Paid advertising

On platforms such as Google Ads and Meta Ads, advertisers can test different ad creatives, headlines, descriptions, or CTAs to improve click-through rate, conversion rate, and return on ad spend.

These examples show that meaningful gains often come from small, targeted changes rather than major redesigns.

How to set up an effective A/B test

Running an effective A/B test requires more than using a testing tool. It requires a clear process and proper prioritisation.

Start with the highest-impact opportunities. Not every page or campaign deserves the same level of attention. The best place to begin is where traffic and business impact are already high.

Use existing data to guide priorities. A/B tests should be informed by analytics, heatmaps, product usage data, user feedback, or funnel analysis. These sources help identify friction points and give more relevance to the hypothesis.

Test one change at a time. Testing multiple variables in a simple A/B setup makes interpretation difficult. Keeping the experiment focused improves clarity and supports stronger conclusions.

Estimate sample size before launch. Before starting a test, estimate the number of users or sessions needed to detect a meaningful difference. This depends on the baseline conversion rate, the smallest uplift worth detecting, and the chosen significance level and statistical power. A test that runs on insufficient traffic may produce inconclusive or misleading results.

Align teams on success criteria. Marketing, product, design, and data teams should agree in advance on:

the primary KPI

the secondary KPIs

the hypothesis

the minimum sample size

the stopping criteria

This avoids post-test debates and helps maintain methodological rigour.
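The minimum sample size mentioned above can be estimated before launch. The sketch below uses the standard normal-approximation formula for comparing two proportions; the 5% significance level and 80% power are conventional defaults assumed here, and the baseline and uplift values are hypothetical.

```python
import math
from statistics import NormalDist

def sample_size_per_variant(baseline: float, relative_uplift: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Visitors needed in EACH version to detect the given relative uplift."""
    p1 = baseline
    p2 = baseline * (1 + relative_uplift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)

# Hypothetical case: 4% baseline conversion rate, hoping to detect a 25% relative lift.
print(sample_size_per_variant(0.04, 0.25))
# A smaller expected uplift needs far more traffic.
print(sample_size_per_variant(0.04, 0.10))
```

Running the calculation for a few plausible uplifts shows quickly whether a page has enough traffic to be worth testing at all.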

How to analyse A/B test results

Analysing an A/B test correctly is just as important as setting it up.

Look beyond surface-level changes. A version may appear to win on the primary KPI while causing negative effects elsewhere. For example, a variation may increase clicks but reduce lead quality or average order value. That is why analysis should include both the main KPI and secondary indicators.

Check statistical significance. Statistical significance indicates how unlikely the observed difference would be if the two versions actually performed the same. Without it, there is no reliable basis for implementing the winning version at scale.

Consider segment-level differences. A variation may not perform equally across all audiences. Segmenting results can reveal important patterns, such as differences by:

mobile versus desktop users

paid versus organic traffic

new versus returning visitors

geography

customer value tier

This level of analysis turns a simple test into a deeper source of insight, provided each segment contains enough users to support its own conclusion.
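A segment breakdown like the one listed above can be sketched in a few lines. The event tuples below are toy data invented for this example, and the `conversion_by_segment` helper is hypothetical; in practice the rows would come from an analytics export or a data warehouse.

```python
from collections import defaultdict

def conversion_by_segment(events):
    """events: iterable of (segment, variant, converted) tuples."""
    # (segment, variant) -> [visitors, conversions]
    totals = defaultdict(lambda: [0, 0])
    for segment, variant, converted in events:
        totals[(segment, variant)][0] += 1
        totals[(segment, variant)][1] += int(converted)
    return {key: conv / visitors for key, (visitors, conv) in totals.items()}

# Toy data only: far too small to draw any real conclusion.
events = [
    ("mobile", "A", True), ("mobile", "A", False),
    ("mobile", "B", False), ("mobile", "B", False),
    ("desktop", "A", False), ("desktop", "A", False),
    ("desktop", "B", True), ("desktop", "B", True),
]
rates = conversion_by_segment(events)
for (segment, variant), rate in sorted(rates.items()):
    print(f"{segment:8s} {variant}: {rate:.0%}")
```

In this toy example version B wins on desktop and loses on mobile, a pattern that a single global conversion rate would hide.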

Evaluate business impact, not just uplift. A test result should be interpreted in context. A statistically significant uplift may still be too small to matter commercially, while a modest gain on a high-volume page may create major revenue impact.

The right question is not only "Did version B win?" but also "Does this result meaningfully improve business performance?"
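A quick calculation can help answer that second question. The sketch below projects monthly revenue from a conversion-rate change, assuming average order value stays the same across versions; all figures are hypothetical.

```python
def projected_monthly_revenue_gain(monthly_visitors: int, baseline_rate: float,
                                   variant_rate: float,
                                   average_order_value: float) -> float:
    """Extra monthly revenue if the variant's conversion rate holds at scale."""
    extra_orders = monthly_visitors * (variant_rate - baseline_rate)
    return extra_orders * average_order_value

# Large uplift on a low-traffic page: 4.0% -> 5.0% with 2,000 visitors a month.
low_traffic = projected_monthly_revenue_gain(2_000, 0.040, 0.050, 60.0)
# Modest uplift on a high-volume page: 4.0% -> 4.2% with 500,000 visitors a month.
high_traffic = projected_monthly_revenue_gain(500_000, 0.040, 0.042, 60.0)

print(f"low-traffic page:  {low_traffic:,.0f} per month")
print(f"high-traffic page: {high_traffic:,.0f} per month")
```

With these assumed numbers, the smaller relative gain on the busier page is worth about fifty times more, which is why uplift alone is not a sufficient decision criterion.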

A/B testing: how to identify the winning variant

A/B Testing vs Multivariate Testing

A/B testing and multivariate testing are related, but they serve different purposes.

A/B testing compares two versions of the same page or element by changing one variable at a time. It is the most common and practical approach for testing a headline, CTA, image, or layout change.

Multivariate testing goes further by testing several changes simultaneously on the same page. For example, a team may test different combinations of headlines, images, and button text at the same time.

Multivariate testing can provide richer insights, but it also requires:

much more traffic

larger sample sizes

more complex analysis

greater operational maturity

For most marketing teams, standard A/B testing is the more accessible and efficient method. Multivariate testing is usually better suited to high-traffic environments with advanced experimentation capabilities.
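The traffic requirement grows quickly because every combination needs its own sample. The sketch below counts the combinations for a hypothetical test of two headlines, three images, and two button labels; the per-combination sample size is an assumed placeholder, not a recommendation.

```python
from itertools import product

# Hypothetical elements under test.
headlines = ["Save time on reporting", "Reports in one click"]
images = ["dashboard", "team", "product"]
buttons = ["Start free trial", "Get started free"]

combinations = list(product(headlines, images, buttons))
print(len(combinations))  # 12 combinations instead of 2 versions

# Assumed placeholder: visitors needed per combination.
sample_per_combination = 5_000
print(f"{len(combinations) * sample_per_combination:,} visitors in total")
print(f"{2 * sample_per_combination:,} visitors for a simple A/B test")
```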

Common A/B testing mistakes to avoid

A/B testing is powerful, but poor execution can make results unreliable. Here are some of the most common mistakes.

Testing without a hypothesis. Changing elements without a clear reason often produces weak learnings. A test should answer a focused question, not just compare arbitrary versions.

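Stopping the test too early. One of the biggest errors is ending a test as soon as one version appears to be ahead. Checking results repeatedly and stopping at the first significant reading greatly increases the chance of declaring a winner that is only noise. Early signals are not enough. An adequate sample size and statistical significance at the planned end of the test are essential.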

Testing too many changes at once. If several elements are changed simultaneously in a simple A/B test, it becomes impossible to know which change caused the performance difference.

Choosing the wrong KPI. A misleading KPI can distort conclusions. For example, increasing clicks is not enough if the final objective is revenue or qualified leads.

Running tests on low-traffic pages. Low traffic slows down data collection and makes it harder to reach significance. In some cases, the test may never produce a reliable result.

Ignoring segmentation. A global result may hide important differences between user groups. Failing to analyse segments can lead to poor rollout decisions.
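The risk of stopping too early can be illustrated with a small simulation. The sketch below runs A/A tests, where both versions are identical, and compares how often a difference looks significant at any interim check with how often it does at the planned end. The conversion rate, number of runs, and check frequency are arbitrary assumptions chosen for illustration.

```python
import math
import random

def z_score(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-proportion z statistic with a pooled standard error."""
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    return 0.0 if se == 0 else (conv_b / n_b - conv_a / n_a) / se

def simulate_aa_tests(runs=200, visitors=2_000, peek_every=200,
                      rate=0.10, seed=42):
    """Return (share flagged at any peek, share flagged at the final look)."""
    rng = random.Random(seed)
    flagged_at_any_peek = 0
    flagged_at_end = 0
    for _ in range(runs):
        conv_a = conv_b = 0
        peeked_significant = False
        for n in range(1, visitors + 1):
            # Both versions share the same true rate: any "winner" is noise.
            conv_a += rng.random() < rate
            conv_b += rng.random() < rate
            if n % peek_every == 0 and abs(z_score(conv_a, n, conv_b, n)) > 1.96:
                peeked_significant = True
        flagged_at_any_peek += peeked_significant
        flagged_at_end += abs(z_score(conv_a, visitors, conv_b, visitors)) > 1.96
    return flagged_at_any_peek / runs, flagged_at_end / runs

peek_rate, end_rate = simulate_aa_tests()
print(f"false winners when stopping at the first significant peek: {peek_rate:.0%}")
print(f"false winners when judging only at the planned end:        {end_rate:.0%}")
```

Judging only at the end should flag a false winner in roughly 5% of runs, while stopping at the first significant peek typically flags noticeably more, even though nothing was changed between the two versions.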

Best practices for successful A/B tests

To make A/B testing a real growth lever, businesses should follow a few core principles.

Test continuously rather than occasionally. The best results come from a steady experimentation process, not isolated one-off tests.

Document everything. Keep track of the hypothesis, variants, KPIs, audience, duration, final result, and key learnings from each experiment.

Build a testing roadmap. Prioritise experiments based on potential impact, effort, and strategic relevance.

Focus on meaningful changes. Some micro-optimisations are useful, but the highest gains often come from improving value proposition clarity, friction points, trust elements, and core journey steps.

Share learnings across teams. A/B testing should not remain isolated within one function. Insights from experimentation can benefit marketing, product, CRM, design, and growth teams.
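For the "Document everything" practice above, a lightweight structured record is often enough. The sketch below is one possible shape for such a record; the `ExperimentRecord` class, its field names, and the example dates and values are assumptions made for illustration.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date

@dataclass
class ExperimentRecord:
    name: str
    hypothesis: str
    variants: list[str]
    primary_kpi: str
    audience: str
    start: date
    end: date
    result: str = "pending"
    learnings: list[str] = field(default_factory=list)

    @property
    def duration_days(self) -> int:
        # Inclusive of both the first and the last day.
        return (self.end - self.start).days + 1

# Hypothetical entry based on the CTA hypothesis used earlier in this article.
record = ExperimentRecord(
    name="guide-cta-wording",
    hypothesis="'Get the guide' will convert better than 'Download now' "
               "because the value of the action is clearer.",
    variants=["A: Download now", "B: Get the guide"],
    primary_kpi="conversion rate",
    audience="all landing page visitors",
    start=date(2026, 3, 2),
    end=date(2026, 3, 15),
)
print(record.duration_days)  # 14
# Dates are not JSON-serialisable by default, so convert them to strings.
print(json.dumps(asdict(record), default=str, indent=2))
```

Stored as JSON or as rows in a shared table, records like this make past experiments searchable when planning the next round of tests.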

The limits of A/B Testing

Despite its value, A/B testing is not a universal solution.

It requires enough traffic to reach reliable conclusions. Smaller websites may struggle to reach a sufficient sample size within a reasonable time.

It can also be affected by external variables such as seasonality, promotions, advertising intensity, or shifts in acquisition mix. These factors can influence results even when a test is technically well designed.

In addition, A/B testing is best suited to optimising existing journeys, pages, or elements. It does not replace broader strategic thinking about positioning, audience fit, brand differentiation, or business model choices.

In other words, A/B testing helps improve execution, but it should sit within a larger performance and growth strategy.

A/B Testing and data-driven personalisation

A/B testing becomes even more powerful when combined with audience segmentation and customer data.

Instead of evaluating performance only at a global level, businesses can analyse how different variants perform for specific user segments, such as:

new visitors versus existing customers

mobile versus desktop users

high-value customers versus occasional buyers

users acquired through paid media versus email

customers at different lifecycle stages or levels of engagement

This is where a strong data foundation matters. When customer data is unified across analytics, CRM, advertising, and product touchpoints, teams can design more relevant experiments and measure their impact more precisely.

A Composable Customer Data Platform such as DinMo can support this approach by helping teams unify data from multiple sources, create dynamic segments, and connect experimentation insights with downstream activation. In that context, A/B testing is no longer just a CRO tactic. It becomes a strategic lever for improving marketing performance, personalisation, and customer lifetime value.

Conclusion

A/B testing is one of the most effective ways to improve digital performance through controlled experimentation.

By comparing an original version with a new variation, businesses can identify which changes generate better conversion, engagement, and revenue outcomes. Whether the objective is to optimise a landing page, improve an email campaign, refine a product page, or increase paid media efficiency, A/B testing provides a reliable method for making better decisions.

Its true value, however, comes from consistency. The goal is not simply to run isolated tests. It is to build a long-term experimentation process that helps teams understand users better, optimise key journeys, and improve results over time.

When connected to a broader data-driven strategy, A/B testing becomes a durable engine for marketing optimisation and business growth.
